Optmizing Entity Framework memory usage to bring down service memory usage from 90% (> 8GB) to negligible. Most of the core services needed meaty VMs that had more than 8GB RAM. And these services still under-performed.

Day 0 - July 3rd, 2018

The story started when I saw this ping on the Hyper-V channel.

[03/07/2018 1:11 PM] Ketan Jawahire: When service takes more than 90% of VMs memory then it becomes really difficult to work with that VMs.

PS takes minutes even to single simple statement. 😫

[03/07/2018 1:29 PM] Suneel P: changed all Ad Data Download VMs memory config to Azure config

[03/07/2018 1:29 PM] Ketan Jawahire: I am going to install it on some azure VM & check for log files. Its really difficult to use PS with VMs using 90%+ memory

[03/07/2018 1:30 PM] Suneel P: try using Ad data download vms - 23,28,32,38. they have enough ram now

Here’s our story of a clever hack to reduce the memory footprint of EF’s cache objects (mapper objects, etc.).

TLDR / TIL / Key learnings

- Using Visual Studio 2013 to Diagnose .NET Memory Issues in Production

- 80-20 rule

- Multi-tenant Entity Framework Context

- Synonyms in SQL Server

- Hacking EF to use the same DbCompiledModel across multiple dynamic schemas

Day 1 - July 4th, 2018

I was determined to see why the F do these services consume so much RAM. As long as we were on Azure, we solved this by scaling up the VM or adding a new VM and scaling out the service (I never got far enough to implement VM Scale Sets completely. It stopped at a POC).

But, with this move out of Azure (more on this coming soon!) on to our own bare metal boxes, this is a buzzkill.

So, the journey begins.

I RDP into the VM and find SocialAccountSyncService using ~7GB or RAM (VM’s RAM is 8GB). It was continuously around the 90% mark. That’s not good - it can create all kinds of problems and can make the machine (and hence the service) under-perform. Besides, looking into our internal service stats, I saw that the service was only running a handful of worker threads.

I was quite sure that there was nothing we were doing in those 2-3 running worker threads that should make the service occupy that much RAM. So I started by accusing the service of having a memory leak. On the tracking team, we already had dealt with services that had high memory usage; and we knew the culprit there - Entity Framework (we were on version 5 back then). We addressed this by switching to a much much lighter Dapper. This introduced a little developer overhead as we had to write a few more SQL queries (which we’re very good at) but the trade-off was worth it. We didn’t have to deal with OOM exceptions anymore.

So it was pretty evident that EF would be the cause here as well. But I needed to prove the mem leak, then find it, and finally plug it.

Google search results for ".net memory leak detection tools"

Reading through Using Visual Studio 2013 to Diagnose .NET Memory Issues in Production, I learnt that we could create dump files of the process and then analyze what was in it. Awesome - I should be able to find what’s taking up the RAM space.

I initiated the dump. A while later, I had an 8GB dump file.

My laptop has 12GB RAM (and an i5) - I should be able to analyze the dump right? Wrong - opening the dump in Visual Studio 2017 Ultimate works. But analyze dies because VS runs as a 32bit process - so it crashed when it hit the ~4GB RAM mark while analyzing my 8GB dump.

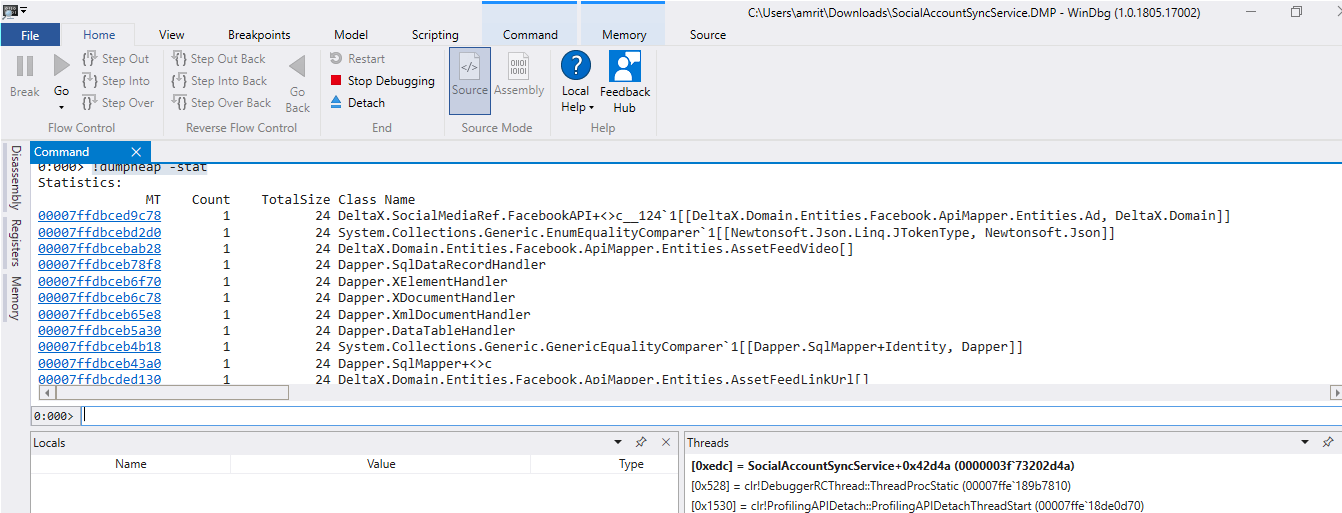

So found an alternative to VS - WinDbg Running the below commands (I have no idea what they actually mean/do :P) pulls out the stats of the objects in the memory heap.

!loadby sos clr

!dumpheap -stat

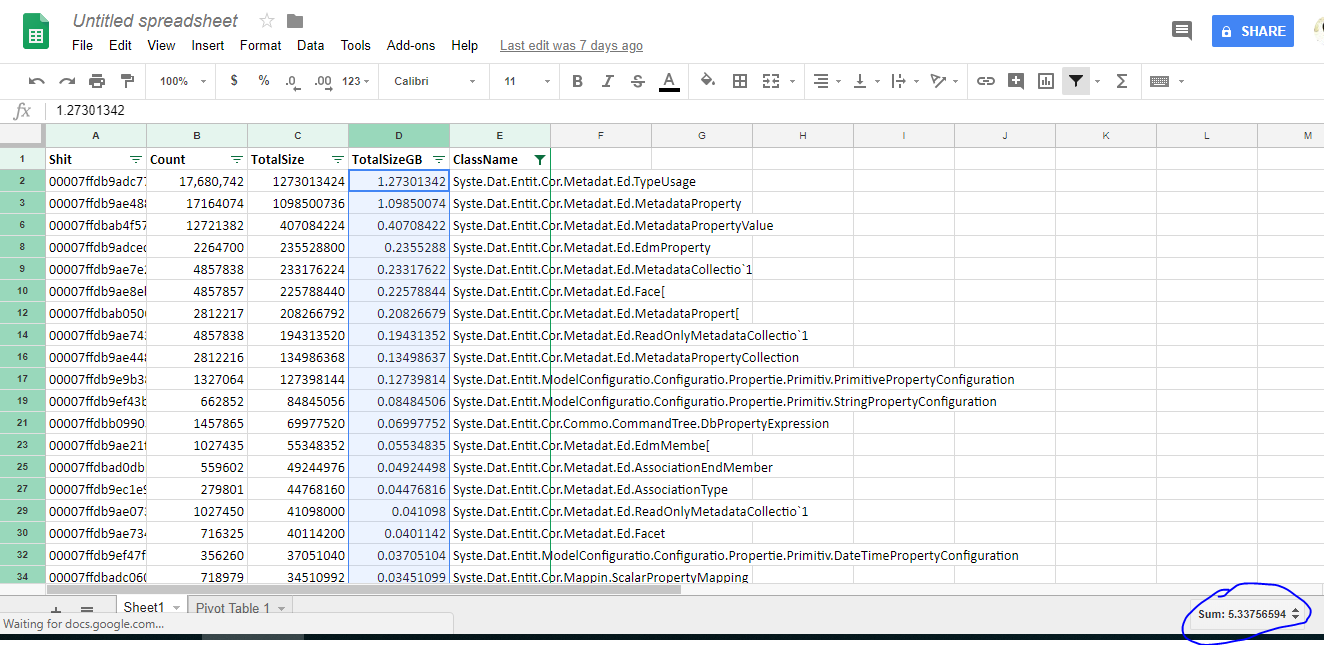

I put that output onto a spreadsheet, applied some filters and found that objects from the System.Data.Entity.* namespace occupied ~5.3GB!

There was my proof that EF was the cluprit. But it’s probably not true - it’s more likely that we had some “sh*tty code” that was causing this leak. Maybe a bad repository class that wasn’t disposing things correctly ?

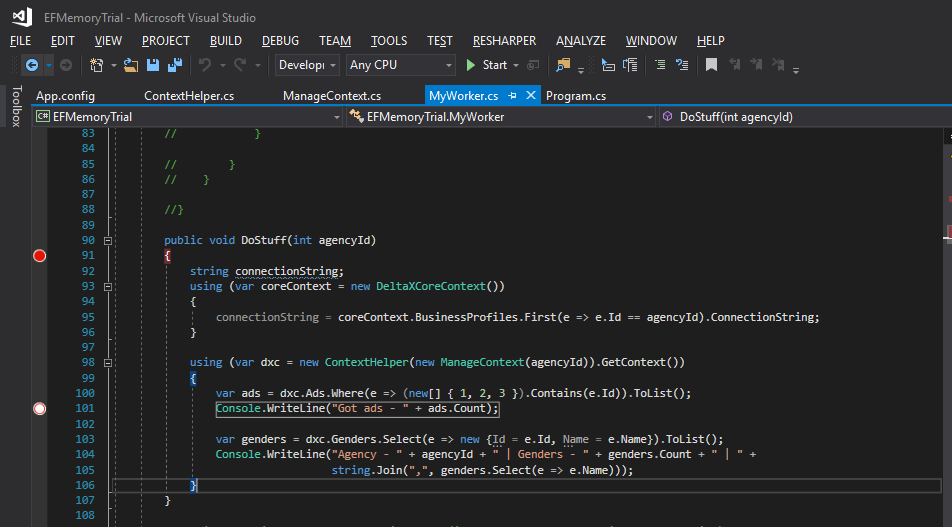

After brainstorming and white-boarding (with the team) about my learnings so far, we decided I’ll try a very simple console application that’ll load the context and see what’s in the memory.

During the discussion, especially with @Vamsi, I was very clear (adamant) on one thing - I wanted to gain 80% benefit by only applying 20% of my effort. So a rewrite without EF was not an option right now.

I read up on Repository Pattern and IDisposable.

I hoped that we had some silly code which was causing the EF objects to not dispose correctly. I read all about managed resources and unmanaged resources

Day 2 - July 5th, 2018

I had my console application ready.

So I kept running it - trying to understand the pattern of the memory leak.

Was every run causing it? Was every invocation of the EF context causing it?

Repeated runs and some more pairs of eyes later, we found that objects of type DbCompiledModel kept growing with each run.

VS’s heap diff helped tremendously - allowing us to compare memory snapshots of before and after.

Akshay, Ketan, Krishna and I also black-boarded some thoughts on disposal and repo patterns - since I was hoping to find the culprit there.

In short, I think - if your class has a member that implements IDisposable, your class also must implement IDisposable and ensure that you dispose off that member.

// TODO - Video of memory profiling and debugging in VS

So what’s DbCompiledModel anyway?

Well, DbCompiledModel contains the mappings between our app’s POCO classes and the database tables.

So why is it continuously accumulating in the memory and not being disposed / garbage collected? Well that’s what we need to find out!

What’s interesting is that after the first run, there are 2 instances of DbCompiledModel - one for each DbContext, i.e DeltaXCoreContext and DeltaXContext. On the second run, one more instance of DbCompiledModel gets added to the heap. Third run adds another. And this goes on!

But why does only 1 instance of DbCompiledModel get added every run? Shouldn’t it be 2?

It should - but it feels like the DbCompiledModel of DeltaXCoreContext isn’t leaking. There’s just one instance in the entire app.

This also makes some sense - because DeltaXCoreContext is a textbook EF context.

public class DeltaXCoreContext : DbContext

{

public DeltaXCoreContext()

: base("name=DeltaXCoreContext")

{

Database.SetInitializer<DeltaXCoreContext>(null);

}

public DbSet<Currency> Currencies { get; set; }

public DbSet<CoreBusinessProfile> BusinessProfiles { get; set; }

.

.

protected override void OnModelCreating(DbModelBuilder modelBuilder)

{

modelBuilder.Configurations.Add(new CurrencyConfiguration());

modelBuilder.Configurations.Add(new CoreBusinessProfileConfiguration());

.

.

}

}

However, DeltaXContext is a little special. Since we use a multi-tenant setup, we need to do some additional stuff to make the EF context map to the dynamic schemas (See this post from 2011 as well).

public class DeltaXContext : DbContext

{

// Notice the special "DbCompiledModel" parameter that we are using to instantiate the context

public DeltaXContext(int agencyId, DbCompiledModel dbCompiledModel)

: base(ContextHelper.GetConnectionString(agencyId), dbCompiledModel)

{

Database.SetInitializer<DeltaXContext>(null);

}

public DbSet<Campaign> Campaigns { set; get; }

protected override void OnModelCreating(DbModelBuilder modelBuilder)

{

modelBuilder.Configurations.Add(new CampaignConfiguration());

}

}

// Somewhere else

public DbCompiledModel CreateCompiledModel(int agencyId, SqlConnection sqlconnection)

{

string tblPreFix = agencyId <= 0 ? "" : "Agency" + agencyId.ToString();

DbModelBuilder modelBuilder = new DbModelBuilder(DbModelBuilderVersion.V4_1);

// Map the "Campaign" POCO to "[Agency1].[Campaigns]" table

modelBuilder.Configurations.Add(new CampaignConfiguration("Campaigns", tblPreFix));

var dbc = modelBuilder.Build(connection).Compile();

return dbc;

}

// Usage of DeltaXContext

using (var sqlConnection = new SqlConnection(connectionString))

{

var dbc = CreateCompiledModel(agencyId, sqlConnection);

using (var dxc = new DeltaXContext(agencyId, dbc))

{

var campaigns = dxc.Campaigns.Where(e => (new[] { 1, 2, 3 }).Contains(e.Id)).ToList();

Console.WriteLine("Got campaigns - " + campaigns.Count);

}

}

This is probably the only way to use EF in a multi-tenant setup.

When we migrated to Azure, we moved from a shared-database-schema-separated model to tenant-per-database model. This gave us the isolation we needed!

We now had hundreds of tenants. This added to the memory load - hundreds of DbCompiledModels needed to exist. And EF was a hungry cache machine! On the web application, we cached the DbCompiledModel in the app’s in-memory ObjectCache. This had it’s limitations and overheads - the cache couldn’t be shared across web app instances. And we had serious troubles serializing<->de-serializing DbCompiledModel into a Redis cache.

private readonly ObjectCache _cache = MemoryCache.Default;

public void AddCompileModelToCache(DbCompiledModel _dbCompiledModel)

{

string sessionAgencyId = HttpContext.Current.Items[Common.SessionKeys.CURRENT_USER_AGENCYID] == null

? "0"

: Convert.ToString(HttpContext.Current.Items[Common.SessionKeys.CURRENT_USER_AGENCYID]);

string contextKey = (sessionAgencyId == "0" ? _agencyId.ToString() : sessionAgencyId) + "ComipledModel";

CacheItemPolicy cacheItemPolicy = new CacheItemPolicy

{

Priority = CacheItemPriority.NotRemovable,

RemovedCallback = arguments =>

{

string message = string.Format("ContextCacheInfo - REMOVE - Key : {0} Reason : {1}",

arguments.CacheItem.Key, arguments.RemovedReason.ToString());

Log.Warn(message);

Notify(message);

}

};

Log.WarnFormat("ContextCacheInfo - ADD - Key : {0}", contextKey);

_cache.Set(contextKey, _dbCompiledModel, cacheItemPolicy);

}

Ok - so long story so far. I’d basically given up at this point because *this is the way EF works!*. There was no way to fix this.

BUT, we never give up

@Ketan, @Akshay and me also had quick discussion around moving back to a fixed schema - considering now we were using separate DBs by BP. This would mean code changes and code fixes to every single SP where a scheme was assumed - and wasn’t a simple Find + Replace problem. @Akshay, somehow, unassumingly uttered the words - “Apneko alias type functionality chaiyeh SQL side pe”. It was just a thought. We almost dispersed, and took to lunch in the next few mins feeling a bit incomplete. While we were still at the lunch table and sitting across the table - @Akshay came back with - “There’s something called synonyms in SQL Server”.

We quickly searched and found that synonyms shouldn’t impact performance and for us by now this looked like another unexmplored path.

So I got back to hacking! This time we’ll address the problem from the databse side.

Use DeltaXBp1

GO

CREATE SYNONYM [Agency0].[Campaigns] FOR [Agency1].[Campaigns]

GO

-- Test it

SELECT TOP 10 * FROM [Agency0].[Campaigns]

SELECT TOP 10 * FROM [Agency1].[Campaigns]

Use DeltaXBp2

GO

CREATE SYNONYM [Agency0].[Campaigns] FOR [Agency2].[Campaigns]

GO

-- Test it

SELECT TOP 10 * FROM [Agency0].[Campaigns]

SELECT TOP 10 * FROM [Agency2].[Campaigns]

Awesome - now we can refer to a table using either [Agency0] or [Agency#].

Next, we *cleverly* trick our EF context into talking to multiple dynamic schemas using the same DbCompiledModel!

public class DeltaXContext : DbContext

{

// Notice the special "DbCompiledModel" parameter is NO MORE!

public DeltaXContext(int agencyId)

: base(ContextHelper.GetConnectionString(agencyId))

{

Database.SetInitializer<DeltaXContext>(null);

}

public DbSet<Campaign> Campaigns { set; get; }

protected override void OnModelCreating(DbModelBuilder modelBuilder)

{

string tblPreFix = "Agency0";

modelBuilder.Configurations.Add(new CampaignConfiguration("Campaigns", tblPreFix));

}

}

// We also don't need the "CreateCompiledModel" method anymore!

Since the tenants are per database, adding a synonym with [Agency0] didn’t cause any problems/conflicts. Every database could have a [Agency0].[Campaigns] synonym. EF builds the model using the connectionString that we provide it.

So - we now only need one DbCompiledModel for every tenant, despite each database having a dynamic schema.

This fixed our memory leak issue - now the console app always had 2 instances of DbCompiledModel in the memory heap.

For a real feeler of what this means, we needed to check the memory usage of the service (and of course, ensure that synonyms worked as expected without any weird issues).

I deployed these changes onto our testing VM for the same SocialAccountSyncService. It would normally run with ~3GB of RAM (out of the 4GB on the VM). This version ram under 500mb of RAM!

[05/07/2018 5:56 PM] Amrith Yerramilli:

Deployed webapp - working as expected (saw dashboard only)

Deployed FB Sync - working super (< 130mb RAM, picked 30 threads at a time)

[05/07/2018 5:57 PM] Akshay Surve: sent a sticker - Love

<img src="/img/akshay-sticker.PNG" alt="akshay-sticker" style="height: 150px" >

[05/07/2018 5:57 PM] Amrith Yerramilli: Memory is not "growing". It's staying at ~130mb

"Export Service" is growing (270 to 295 right now, and growing. Will deploy this also and prove the point)

Production kar du ?

[05/07/2018 6:53 PM] Amrith Yerramilli: Running some trials / stress-testing on testing VM.

What's been observed so far :

- 100 * 3 tasks picked at once (across 3 workers)

- Tasks are running as expected (status 8 and 10 ones are there)

- RAM usage increased reasonably from 150mb to ~950mb (max that I saw)

- RAM usage also drops reasonably (950 to 500, then lower, then higher as tasks pick up)

- All tasks completed *just* now (650+ tasks)

- Running threads reports zero (expected)

- RAM is now at ~150mb

- _Anomalies_ : CPU spikes were observed; occasionally CPU shot up to high 90s (98/99 %).

-- Opening up resource monitor and some stuff showed "GDI PLUS" in that; this is most likely related to "Image" objects that we use in FB sync.

-- CPU spikes returned to normal in some time (most likely since task completed)

[05/07/2018 6:55 PM] Amrith Yerramilli: --

Trying the same trial using previous version of service.

[05/07/2018 7:19 PM] Amrith Yerramilli: Current ram 1.3GB

in progress - 200

in queue ~50

[05/07/2018 7:56 PM] Amrith Yerramilli: 22 ad tasks left, RAM at ~1.3gb

[05/07/2018 7:56 PM] Amrith Yerramilli: waiting for completion

[05/07/2018 7:59 PM] Amrith Yerramilli: All tasks complete. Ram is at 1.2

Taking a dump file to confirm that EF objects are occupying the space.

[05/07/2018 8:00 PM] Akshay Surve: RAM went down?

[05/07/2018 8:01 PM] Amrith Yerramilli: Not the same ratio as previous version.

RAM has been hovering at 1.2 - 1.4 for a long time now.

And currently the service is not running any tasks - it's at 1.2GB (ours was at 140mb)

[05/07/2018 8:06 PM] Amrith Yerramilli: RAM has dropped to ~1gb now. But has stayed there.

Running another 10 ad worker tasks now - expecting mem to go up from 1gb and then stay there (since new EF objects would be created)

[05/07/2018 8:07 PM] Amrith Yerramilli: >> Is there any service which needs freq restart?

Insights service

[05/07/2018 8:07 PM] Amrith Yerramilli: >> RAM has dropped to ~1gb now. But has stayed there.

Ok, went back to 1.3

So we stress tested this - increased the number of worker threads to spawn in the service to 300 (usually it was 30). To @Akshay’s surprise, all threads were spawned and processed without a hiccup!

Of course, by now, Akshay and I were hiccuping :P

So, on we go to Production

[05/07/2018 9:59 PM] Amrith Yerramilli: Yes - can see.

--

- Moving on to service deploy

[05/07/2018 10:01 PM] Amrith Yerramilli: -- Allah o akbar

-- http://dxdev-jenkins...../job/Git-AdDataDownloadService/124/console

[05/07/2018 10:17 PM] Amrith Yerramilli: -- Tasks are getting processed fine (can't see any failures, success count increasing, inqueue as well)

[05/07/2018 10:18 PM] Akshay Surve: running workers?

[05/07/2018 10:19 PM] Amrith Yerramilli: DimensionAdDataDownload max 4, running 4

FacebookAdDataDownload max 15, running 15

[05/07/2018 10:22 PM] Amrith Yerramilli: -- Feeling good/adventurous. Deploying to other 2 VMs as well

[06/07/2018 12:42 AM] Amrith Yerramilli: Stopping FB sync on

dxprod-hz-vm12

dxprod-hz-vm3

dxprod-hz-vm33

dxprod-hz-vm38

dxprod-hz-vm39

dxprod-hz-vm40

[06/07/2018 12:42 AM] Amrith Yerramilli: dxprod-hz-vm1

[06/07/2018 12:44 AM] Akshay Surve: 7 vms?

[06/07/2018 12:44 AM] Amrith Yerramilli: Itne me SMT configured hain

[06/07/2018 12:45 AM] Amrith Yerramilli: All safe stopped (there were no running tasks) :P

[06/07/2018 12:54 AM] Amrith Yerramilli: vm28 also is running ad-data ?

10GB assigned, 9GB in use

[06/07/2018 12:54 AM] Amrith Yerramilli: SMT says ad-data

[06/07/2018 12:54 AM] Amrith Yerramilli: AdsConsumer

AdWordsAdDataDownload

AdwordsCampaignDataDownload

AdWordsVideoAdDataDownload

BingAdDataDownload

[06/07/2018 12:56 AM] Krishna HS: considering only fb right?

[06/07/2018 12:56 AM] Amrith Yerramilli: I'm Batman

[06/07/2018 1:00 AM] Akshay Surve: @Amrith Y wants to hear deploy all services 🤣

[06/07/2018 1:02 AM] Akshay Surve: TGIF-already! Lets go easy on other services

[06/07/2018 2:04 AM] Amrith Yerramilli: saw a one-off "Cannot find the object "Agency1394.AdDailyFacts-Hourly-20180705-19"" in AdsConsumer, however, must have been due to kill service or something

Multiple AdsConsumer have Completed successfully after this isolated error.

--

ALL GOOD

[06/07/2018 2:05 AM] Amrith Yerramilli: Pretty close to signing off for tonight.

[06/07/2018 2:11 AM] Amrith Yerramilli: --

SPM Saved Searches

AY - EF DeltaX-AdDataDownload - Failures

http://<KIBANA_URL>/goto/XXXX

AY - EF DeltaXFacebookSyncService - Failures

http://<KIBANA_URL>/goto/YYYY

Day 3 - July 6th, 2018

[06/07/2018 2:25 AM] Amrith Yerramilli: sent an image entity-framework-now-just-stop-right-there.jpg

[06/07/2018 8:09 AM] Amrith Yerramilli: No abnormal errors in the last 12 hours. 😎 Just checked.

[06/07/2018 9:13 AM] Krishna HS: Yaaay!!

[06/07/2018 10:13 AM] Akshay Surve: dxprod-hz-vm28

Max - 14GB

Assigned - 1.4GB

dxprod-hz-vm40

Max - 14GB

Assigned - 1.3GB

dxprod-hz-vm38

Max - 8GB

Assigned - 1.5GB

The End.

This story is verbose but covers a range of topics and more importantly tries to capture our essence and grit when it comes to solving problems at the ad-tech scale!